We design purpose-built AI products for advanced users navigating complex, high-stakes decisions. Our products operate at the strategic layer: rather than solving a specific operational problem, they address the foundational one, the inability to reason clearly about an interconnected, multidimensional world in an information environment optimized to prevent exactly that kind of thinking.

Current pipeline:

PDI™ (Personal Director of Intelligence): Decision support for executives and strategists navigating macro uncertainty. Daily intelligence filtered by role and framed for decisions. Geopolitics, technology, and capital markets, synthesized rather than aggregated. Early access available.

LibrosRX™: Precision reading recommendations for cognitive restoration. Built on the premise that what you read shapes how you think, and that thinking well requires deliberate input, not algorithmic noise.

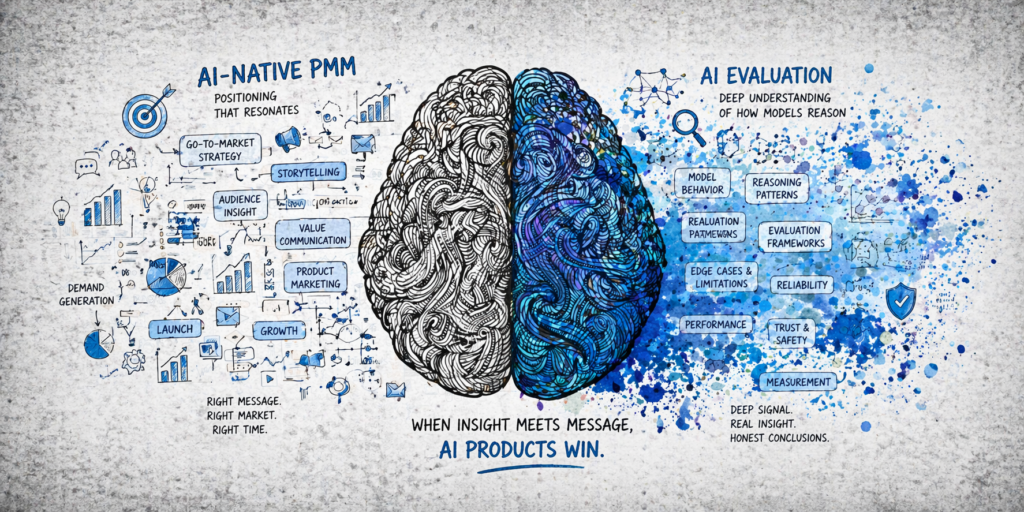

Marketer’s LaunchPad™: AI-native go-to-market (GTM) copilot for product marketing teams. A Chief of Staff for your launch: it drafts, tracks, nudges, and knows when Finance is the bottleneck. Built for teams who want AI as a co-creator, not a task-runner.

→ Inquire about early access or partnership → contact@terra30.com